Way back when AOL was a big tech company and people reached the World Wide Web via dial-up modems, Congress added a provision to federal law that has had a profound effect on every aspect of our democracy and public life. It’s called Section 230 of the Communications Decency Act, and it established that internet platforms, or message boards, as they were then largely called, are not legally liable for false or defamatory information posted by users.

Although no one could have imagined it at the time, the 1996 legislation made possible the explosive growth of the modern internet. Freed from the threat of being sued for libel, Facebook, Twitter, Reddit and other corners of cyberspace became places where literally billions of people felt free to say whatever they wanted, from robust political disputes to false accusations of horrific acts to the spread of disinformation and lies. People often wrote actionable things about others but were seldom, if ever, sued personally for what they had said, the only recourse allowed under the new law. Also, individuals were less attractive targets for costly lawsuits than wealthy corporations.

The protection from legal liability proved essential to the platforms’ expansion, allowing them to remove posts that contained hate speech and other graphic material that might drive away users or advertisers. But at the same time, they did not have to read, research and “moderate,” in their terminology, every vituperative, spite-laced statement put on their sites by users.

Jeff Kosseff, a former reporter turned lawyer and legal scholar, has emerged as one of the leading experts on the 1996 law and is the author of the aptly titled book “The Twenty-Six Words That Created the Internet.” With a growing impetus for both political parties to do something to improve Section 230, I connected with Kosseff recently to get his thoughts. (Full disclosure: When I was a managing editor at the Oregonian back in the early aughts, he covered telecommunications for the paper and eventually became its Washington correspondent.)

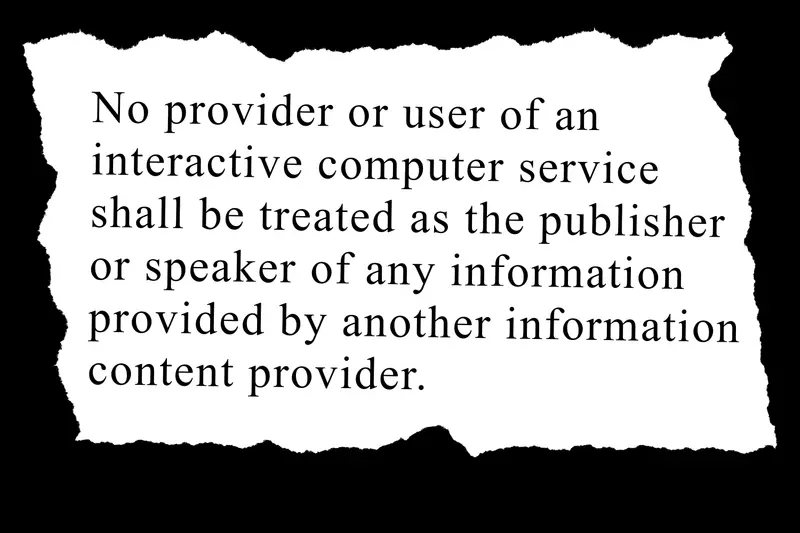

For those of you not steeped in press and communications law, here are the 26 words. They’re not exactly felicitous.

“No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

How did you get into this seemingly obscure subject?

It’s funny. [Oregon Sen. Ron] Wyden was one of the people who wrote Section 230, but when I covered him on a daily basis for about five years, he never mentioned this to me. It wasn’t until I started practicing law: I would represent media companies that had mostly local news outlets, but would have user comments on their websites. We would occasionally get complaints from people who wanted us to remove the user comments. And I quickly learned that I just needed to send them a one-page letter citing this thing called Section 230, and they would magically go away. I thought that was pretty remarkable, and I was just really intrigued by how we had something in the law that let me tell people to go buzz off.

Were there unexpected discoveries in your research?

The most surprising thing was that this law passed with almost no attention whatsoever. It was folded into the Telecommunications Act of 1996. All of the media coverage at the time was about things like long-distance telephone competition, because that’s where the lobbyists were. Nobody really noticed that there was this liability protection [that] had been put in. At the time, this was really about Prodigy and AOL, but no one really cared, because no one really thought very much about what the impact would be of making Prodigy immune from tort lawsuits. There was little coverage of the fact that [Congress was] basically shielding companies from almost any liability whatsoever. It took quite a few years before people started to see what Section 230 actually did.

Could the platforms that we all know so well — Twitter, Facebook, Instagram, for starters — exist without Section 230?

No, they’d have looked very different without Section 230. Without it, they would either have to prescreen content, which would basically upend their business model, or, as the liberals would like, they would have to remove any user content as soon as they received a complaint. That would make it much more difficult to operate a site like Yelp, which relies on having negative user content that the people it’s about would want taken down.

In recent years, we’ve seen the dangers of conspiracy theories spreading unchecked across the internet, from QAnon to anti-vaxxers. There are voices that are saying: “Can’t we have a little more regulation here? Let’s tweak Section 230.” Do you think that’s possible? And what might it look like if Congress tried?

I think it’s possible to reform Section 230. But there are a few barriers. First, we haven’t agreed on what the problem is. There are very different conceptions of what is wrong. Half of Washington thinks that there should be more moderation and that the platforms should be more restrictive. The other half thinks that the platforms need to be less restrictive, and in many cases say they shouldn’t do any moderation at all.

Also there’s this: If you’re saying that there should be more moderation and less harmful content, the big problem with that is that we still have the First Amendment.

Meaning?

There’s a lot of stuff that is lawful, but awful, and the government can’t regulate that away with or without Section 230. Section 230, in fact, gives the platforms more flexibility to moderate, because it was driven by this really bad New York State court ruling in 1995, which said that if platforms did any moderation at all, they’d become liable for everything posted on their services.

You’re referring to the origin story of Section 230, which strangely takes us back to the movie “The Wolf of Wall Street,” in which Leonardo DiCaprio plays Jordan Belfort, the owner of a brokerage firm called Stratton Oakmont, which specialized in “pump and dump” schemes. Stratton Oakmont would buy certain stocks cheap and talk up their value to unsuspecting investors — the “pump.” They would then “dump” them before the price collapsed.

Yes. The firm that was portrayed, or at least was the inspiration for “The Wolf of Wall Street,” was Stratton Oakmont. An anonymous user on Prodigy had posted something on a user forum accusing [Stratton Oakmont] of committing various fraudulent acts. Stratton Oakmont sued Prodigy for $200 million in state court [on] Long Island. And what the judge said was that unlike some of its competitors like CompuServe, which did no moderation at all, Prodigy had engaged in some moderation. The company did things like filter out pornography and prevent children from seeing indecent content. Because Prodigy had done some of that, but failed to block these messages about Stratton Oakmont, the judge ruled that Prodigy was just as liable for any of the defamatory content as the person who wrote it.

What this basically meant is that the way to shield yourself from liability if you were a platform or an online service was to do no moderation at all. That started to really freak people out, because this was in 1995 when Time and Newsweek were running these really sensationalist cover stories about cyber pornography. They reported that there was pornography widely available on the internet, and it was going to corrupt every child. At this time of panic, there was speculation that because of this case, you would have Prodigy and AOL and CompuServe saying: “We can’t do any moderation at all.”

So, one of the motivations for Section 230 was to overrule this Prodigy case and to say that it’s completely up to the platform how much they moderate. They could moderate everything, or they could moderate nothing, and they still won’t be liable. It was very much a market-based idea. The services will develop a moderation approach that best meets the needs of their consumers. If they do too much or too little, the consumers will walk away.

It’s interesting that Section 230 was the work of Sen. Wyden, a pro-regulation Democrat, and Rep. Christopher Cox, a very market-driven Republican. Wyden seems to have been persuaded that the best way to contain the possible excesses of speech on the internet was the influence of market forces.

That’s true. They also both represented areas of the country that people don’t normally think of as tech districts, but they both had a heavy tech presence: Oregon and Orange County, California. They saw a lot of commercial potential in the internet, and they wanted to ensure that it wasn’t burdened by a ton of litigation and regulation.

Section 230 has put a lot of power in the hands of a few corporate leaders. Over the past few months, there’s been enormous pressure on Twitter to stop President Trump — now former President Trump — from spreading lies to his millions of followers. And it seems that Twitter’s decision to deprive him of Twitter access did change the national conversation, at least somewhat. Still, I’m uneasy about the notion that the current law gives Jack Dorsey or a senior Twitter official the right to decide where the president of the United States gets heard.

Yes, it’s kind of scary, because even if you agree with what Twitter did in this case, you could envision a situation where you disagree with the next decision it might make.

You have one person in the private sector who’s not elected by anyone who can effectively decide whether someone can speak. So that’s the bad part about it. The good part about it, if you would call it that, is that it is impossible to imagine how the government would be able to block a lot of this harmful speech. Because the private sector is not bound by the First Amendment, companies actually do have more leeway to act in the public interest.

If you relied only on the government [to decide what goes on the internet] because [of] the broad interpretation of the First Amendment that the courts have had, there’s a lot of really terrible stuff that people would be exposed to. I’ve spoken with fairly high-level public officials who tell me, “I just want an internet with no moderation at all. I don’t want the platforms to get involved.”

I think that’s insane. The internet would be unusable if there was no moderation. It would be filled with the worst of humanity. When you sit down with anyone who’s actually moderated content for any of the big social media platforms, I mean, they’re dealing with beheading videos, they’re dealing with child sex abuse material. I think that the problem is that in some cases, not in those extreme cases, there’s speech that one person might say is legitimate and should be up, but others say it should be taken down. A lot of that is around the hate speech area. It’s both hate speech and some of the misinformation where we really have the more heated debates.

Shouldn’t there be a ban on completely wrong information on subjects like, say, vaccination, in which the spread of lies can cost people their lives?

One of the issues becomes who determines whether it’s misinformation. I mean, there is someone who used to be a reporter at a news outlet that you used to work for [The New York Times]. He’s built quite a following of questioning what the government says about COVID. There are some people who would say he should not have a platform on social media because they disagree strongly with how he interprets the data. Others say that he’s a crucial dissenting voice.

In your view, why hasn’t the free market concept behind Section 230 worked out better? Why doesn’t consumer choice act as a brake on the internet?

I think the free-market vision is still valid in many respects. The problem is not as much of a legal one as an economic one. The economic theory of network effects holds that a product or service becomes more valuable when you acquire more and more users. So Twitter or Facebook aren’t terribly valuable to people if they only have 50,000 users, but [if] they have a billion or two billion users, then it’s very valuable. A large part of the value comes from the number of other people on it. That ends up leading to a really massive consolidation around a few platforms. And I don’t think that was necessarily anticipated when Section 230 was passed, but you have that now.

There’s no easy way to deal with it. People keep saying: “Break up Facebook and Instagram.” And I think, sure, you can do that, but that’s not really what’s driving a lot of this. It’s the fact that people have migrated to very few platforms because it makes sense to go where your friends and family are.

And then one guy, be he Mark Zuckerberg, Jack Dorsey or whoever, gets to decide who gets heard.

You can understand that this gets complicated in a democracy in which publicly held companies might make decisions that maximize their profits but are not in the public interest.

We had similar problems back in the days when newspapers had monopolies. At that point, you had even less of an opportunity to get your viewpoint out because you had to hope that someone would write a story or broadcast a story, decisions that were controlled by a few massive companies.

What about the future? There will be pressure from both sides of the aisle to “fix” Section 230. What do you think will happen?

I would have a better idea if there was some agreement on what problem we want to fix. Both sides are to a certain extent under the illusion that if you got rid of Section 230, it would magically fix all of their problems.

I worry that the compromise is going to be this jumbled-together mess of a bill that does not make any sense. That kind of happened a few years ago with the sex trafficking. They amended Section 230 to create an exception for sex trafficking. I testified in the House in favor of a narrow amendment, but what they ended up doing was they cobbled together this amendment that when you read it, it literally does not make sense. It creates certain provisions that don’t appear to be enforceable. A lot of advocates for sex workers are saying that ultimately what’s happened is it’s made life much less safe for sex workers.

A final question: In our democracy, we have a number of people, a significant number, who dispute facts that are clearly facts and accept things as facts that are clearly not true. How can a democracy survive that situation long-term, and is there anything that can be done with Section 230 to improve things?

The Supreme Court has clearly stated that lies in and of themselves are protected by the First Amendment. Now, lies that might cause certain harms, such as defamation if it meets that very high bar, are not protected by the First Amendment. A lot of the problems that we’re facing are the lies that probably are going to be protected by the First Amendment. A lot of it is half-truths or really bad interpretations of information.

That’s not something that changing Section 230 is going to fix, because you still have those protections under the First Amendment. I would say that changing Section 230 would actually make those problems even worse, because you’re going to start to provide incentives for platforms to moderate less. And that’s exactly what Congress was trying to avoid when they passed Section 230 in the first place.